
I want to scrape some specific webpages on a regular basis. To be more precise, I want to scrape sports results. The scraped results should be inserted into an SQLite table. Because the Python script will run each hour, new information will be scraped, but 'old' information will also be scraped again. For a complete list of requirements and the detailed code for this project, check out my GitHub repository here.
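Since every hourly run sees the old results again, the insert step has to tolerate duplicates. Below is a minimal sketch of one way to do that with Python's built-in `sqlite3` module. The table name `results`, its columns, and the helper `save_results` are hypothetical, and the sketch assumes a match is uniquely identified by its date and the two teams.

```python
import sqlite3


def save_results(conn, rows):
    """Insert scraped rows and return how many were actually new.

    Hypothetical schema: one row per match, identified by date and teams.
    """
    conn.execute(
        """
        CREATE TABLE IF NOT EXISTS results (
            match_date TEXT NOT NULL,
            home_team  TEXT NOT NULL,
            away_team  TEXT NOT NULL,
            score      TEXT NOT NULL,
            UNIQUE (match_date, home_team, away_team)
        )
        """
    )

    def row_count():
        return conn.execute("SELECT COUNT(*) FROM results").fetchone()[0]

    before = row_count()
    with conn:  # commits on success, rolls back on error
        # OR IGNORE skips rows that would violate the UNIQUE constraint
        conn.executemany(
            "INSERT OR IGNORE INTO results VALUES (?, ?, ?, ?)", rows
        )
    return row_count() - before


# Simulate two hourly runs; the second run re-scrapes the first result.
conn = sqlite3.connect(":memory:")
first_run = [("2024-05-01", "Lions", "Tigers", "2-1")]
second_run = first_run + [("2024-05-02", "Bears", "Wolves", "0-0")]

added_first = save_results(conn, first_run)
added_second = save_results(conn, second_run)
total_rows = conn.execute("SELECT COUNT(*) FROM results").fetchone()[0]
print(added_first, added_second, total_rows)
```

With `INSERT OR IGNORE`, a re-scraped row is silently skipped, so a score that changes after the first scrape would not be updated. If that matters, an upsert (`INSERT ... ON CONFLICT ... DO UPDATE`) is probably the better fit.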

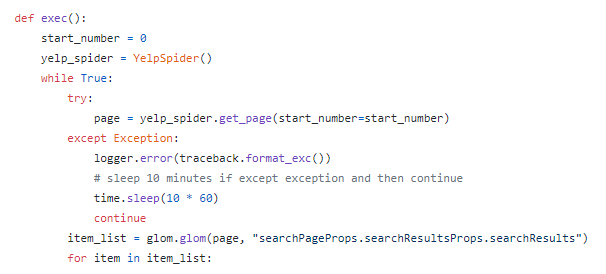

Data is all around us, but getting that data and processing it into a readable format is usually the most time-consuming part of any data pipeline. In this notebook, I will focus on web scraping using BeautifulSoup. The idea is to come up with a generic process that can be used for most websites and turn the internet itself into a data source. We also want to scrape the website in a way that follows the "tidy data" format based on the paper by Hadley Wickham.

The target for this project will be an anime review website where users can sign up and give a review and/or a rating on various anime. This website is similar to IMDb, but it is focused on Japanese animation. I am going to showcase the methodology of scraping this website into a SQLite database that can be easily queried. I will also do a bit of data analysis using SQL and pandas to explore the following questions:

- What are the top 10 genres by popularity and rating?
- What is the studio with the highest average rating for the anime they've produced?
- Is there a connection between source material of an anime vs.
- Is there a correlation between the number of genres vs.

As I said before, I'll cover the Python, SQL, HTML, etc.

You can follow How To Install and Set Up a Local Programming Environment for Python 3 to configure everything you need.

Step 1: Creating a Basic Scraper

Scraping is a two-step process:

1. You systematically find and download web pages.
2. You take those web pages and extract information from them.

Python has become one of the most popular web scraping languages, due in part to the various web libraries that have been created for it.

Now, we would like to extract some useful data from the HTML content. The soup object contains all the data in a nested structure that can be extracted programmatically. The website we want to scrape contains a lot of text, so let's scrape all of that content. First, let's inspect the webpage we want to scrape.

In the above image, we can see that all the content of the page is under the `div` with class `entry-content`. To select it, we search for the given tag with the given attribute. In our case, that means finding every `div` whose class is `entry-content`.

We now have all the content from the site, but you can see that the images and links were scraped as well. So our next task is to find only the text content in the parsed HTML. Inspecting the HTML of our website again, we can see that the text of the page sits under the `<p>` tag. Now we have to find all the `p` tags present in this class. We can use the `find_all` method of BeautifulSoup.
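The two steps described above (download a page, then extract information from it) can be sketched roughly as follows. This is only an illustration: the helper names `download` and `extract_paragraphs` and the sample HTML are made up for this example, and the download step uses the standard library's `urllib` as one possible choice. The extraction step uses BeautifulSoup's `find_all` to select the `div` with class `entry-content` and then only the `p` tags inside it, which leaves out images and links.

```python
from urllib.request import urlopen

from bs4 import BeautifulSoup


def download(url):
    """Step 1: download a page and return its HTML as text."""
    with urlopen(url) as response:
        return response.read().decode("utf-8", errors="replace")


def extract_paragraphs(html):
    """Step 2: return the text of every <p> inside div.entry-content."""
    soup = BeautifulSoup(html, "html.parser")
    paragraphs = []
    # class_ (with underscore) is used because "class" is a Python keyword
    for container in soup.find_all("div", class_="entry-content"):
        for p in container.find_all("p"):
            paragraphs.append(p.get_text(strip=True))
    return paragraphs


# A made-up page fragment standing in for a downloaded page, so the
# example runs without network access.
SAMPLE_HTML = """
<html><body>
<div class="entry-content">
  <p>Python is a high-level language.</p>
  <img src="logo.png">
  <a href="/more">Read more</a>
  <p>It is widely used for scraping.</p>
</div>
<div class="sidebar"><p>Unrelated sidebar text.</p></div>
</body></html>
"""

paragraphs = extract_paragraphs(SAMPLE_HTML)
print(paragraphs)
```

For a real page, the call would look like `extract_paragraphs(download(url))`, with `url` pointing at the page you inspected.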

Output: Python Programming Language - GeeksforGeeks

Data could be stored in popular SQL databases, such as PostgreSQL or MySQL, or in an old-fashioned Excel spreadsheet. Sometimes, data might also be saved in an unconventional format, such as PDF.
